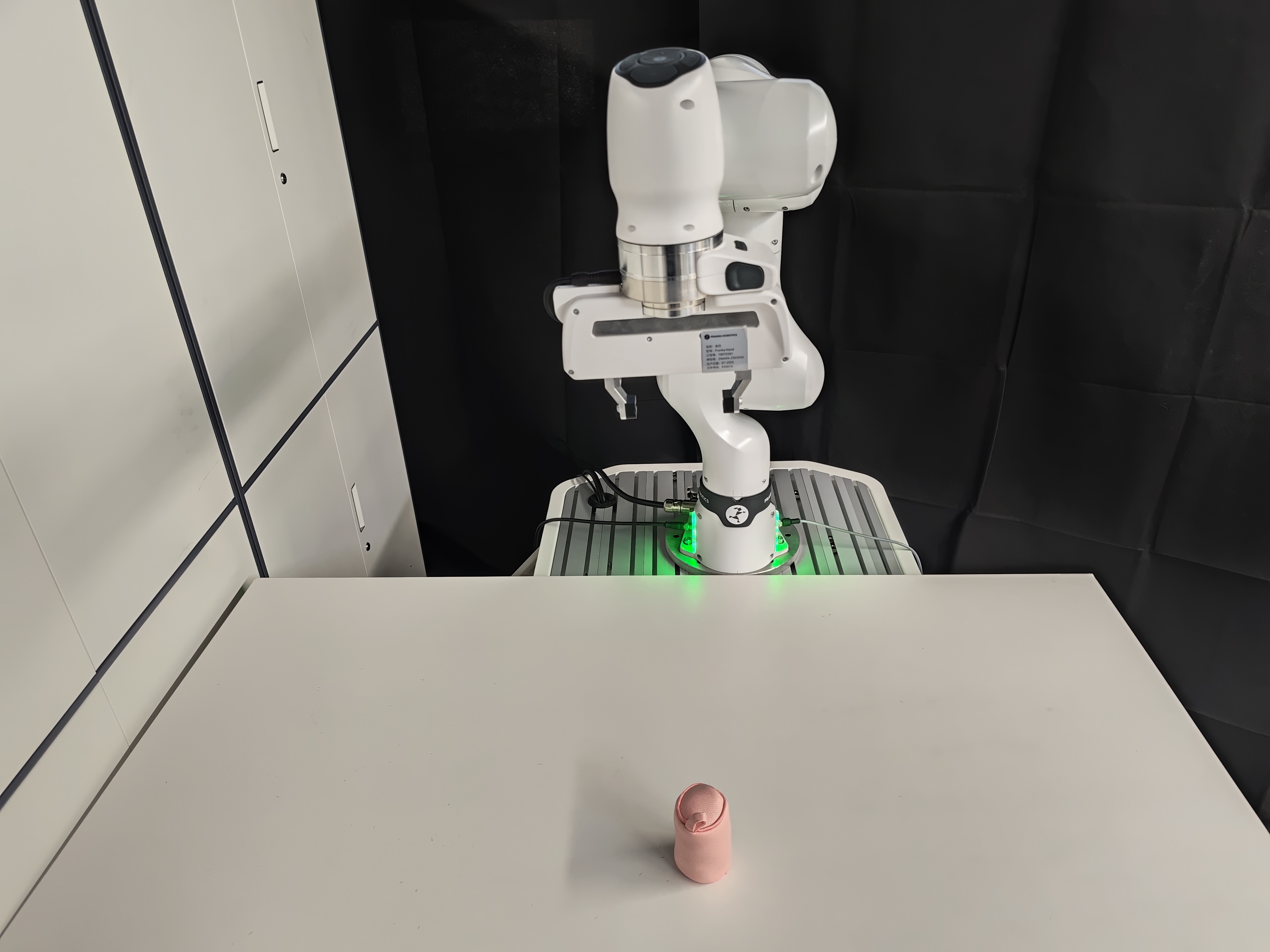

Experimental Setup

Front view

Side view

Task Description

We evaluate our method on the Pick-and-Release task in a real-world setting. The robot must grasp a soft bag whose diameter is approximately 80% of the gripper's jaw width, leaving roughly 10% of the jaw width as clearance on each side when the bag is centered. Because the bag is prone to toppling during execution, the task tests the robot's real-time manipulation capabilities: it must maintain a stable operating posture and precisely time the gripper's opening and closing at high speed.
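To make the size constraint concrete, the clearance can be worked out with a few lines of arithmetic. This is only an illustrative sketch: the 80 mm jaw width is an assumed value (the page does not state which gripper or opening width is used), and the function name `clearance_per_side_mm` is hypothetical.

```python
def clearance_per_side_mm(jaw_width_mm: float, diameter_ratio: float) -> float:
    """Gap between the bag and each finger when the bag is centered.

    jaw_width_mm:   full opening of the gripper (assumed value, not from the page)
    diameter_ratio: bag diameter as a fraction of the jaw width (about 0.8 in the task)
    """
    if not 0.0 < diameter_ratio <= 1.0:
        raise ValueError("diameter_ratio must be in (0, 1]")
    bag_diameter_mm = jaw_width_mm * diameter_ratio
    # The leftover opening is split evenly between the two fingers.
    return (jaw_width_mm - bag_diameter_mm) / 2.0


if __name__ == "__main__":
    assumed_jaw_width_mm = 80.0  # assumption for illustration only
    gap = clearance_per_side_mm(assumed_jaw_width_mm, 0.8)
    print(f"Clearance per side: {gap:.1f} mm")
```

Under this assumed jaw width, the lateral positioning error must stay within a few millimetres for the fingers to close around the bag without knocking it over, which is why gripper timing matters in this task.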

Hardware Setup

- Robot Arm: Franka Research 3

- Camera: UGREEN CM717

- GPU: NVIDIA GeForce RTX 4090D

Qualitative Comparisons

Comparison of different acceleration methods on the Pick-and-Release task. BAC achieves a high inference frequency with low end-to-end latency.
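The two quantities named above are related but distinct: frequency counts how many actions are issued per second, while end-to-end latency is the delay between capturing an observation and issuing the resulting action. The sketch below shows one way they could be computed from logged timestamps. It is not the evaluation code behind these comparisons; the function name `summarize_timing` and the timestamp layout are assumptions made for illustration.

```python
from statistics import mean


def summarize_timing(capture_times, action_times):
    """Summarize control-loop timing from two equal-length timestamp lists (seconds).

    capture_times[i]: when observation i was captured
    action_times[i]:  when the action computed from observation i was issued
    """
    if len(capture_times) != len(action_times) or len(action_times) < 2:
        raise ValueError("need two equal-length lists with at least two samples")
    # End-to-end latency: observation capture to action issue, per step.
    latencies = [a - c for c, a in zip(capture_times, action_times)]
    # Frequency: number of action intervals divided by the elapsed time.
    elapsed = action_times[-1] - action_times[0]
    return {
        "mean_latency_s": mean(latencies),
        "frequency_hz": (len(action_times) - 1) / elapsed,
    }


if __name__ == "__main__":
    # Hypothetical log: one action every 0.1 s, each issued 0.05 s after capture.
    captures = [0.0, 0.1, 0.2, 0.3]
    actions = [0.05, 0.15, 0.25, 0.35]
    print(summarize_timing(captures, actions))
```

In a real run the timestamps would typically come from a monotonic clock such as `time.perf_counter()`, recorded at image capture and at command dispatch.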